What is Kimi, and why is a Chinese AI model suddenly matching the American flagships?

A Chinese open-weight model has matched the paid American flagships on selected benchmarks, at a fraction of the direct-API cost. Here is why that matters.

A Chinese lab most British readers have never heard of has just released a free artificial intelligence model that can match the paid versions of ChatGPT, Claude, and Gemini on one of the industry’s hardest tests. Its name is Kimi, and the version released on 20 April 2026 has quietly changed how the market looks.

At a glance

- Developer: Moonshot AI (China)

- Released: K2.6, 20 April 2026

- What it is: Large language model, open weight, native multimodal

- Closest rivals: Claude Opus 4.6, GPT-5.4, Gemini 3.1 Pro

- Pricing: Free to download. $0.95 per million input tokens and $4.00 per million output tokens via Moonshot’s direct API. Third-party hosts offer lower rates.

- Where to try it: kimi.com

Kimi is the consumer-facing name for a family of AI models built by Moonshot AI, a Beijing-based AI company backed by Alibaba, Tencent, and other Chinese investors. The new release is K2.6. Think of it as the Chinese industry’s answer to the paid chat and coding assistants that dominate generative AI’s early phase. It runs in a browser, on a phone, through an API for developers, and through a command-line tool for engineers.

The single most important thing to understand about K2.6 is that the weights are free. “Weights” is the industry term for the numbers inside the model that do the actual thinking. When a company releases open weights, any team with enough hardware can download the model and run it on their own computers, with no subscription, no rate limit, and no data leaving their building. That is not how Claude, ChatGPT, or Gemini work. Those are paid services that live on their makers’ servers. Moonshot has published K2.6 under a Modified MIT licence, which permits commercial use with one notable threshold: products exceeding one hundred million monthly active users, or twenty million dollars in monthly revenue, must display a visible Kimi K2.6 credit. Everyone smaller than that is free to ship.

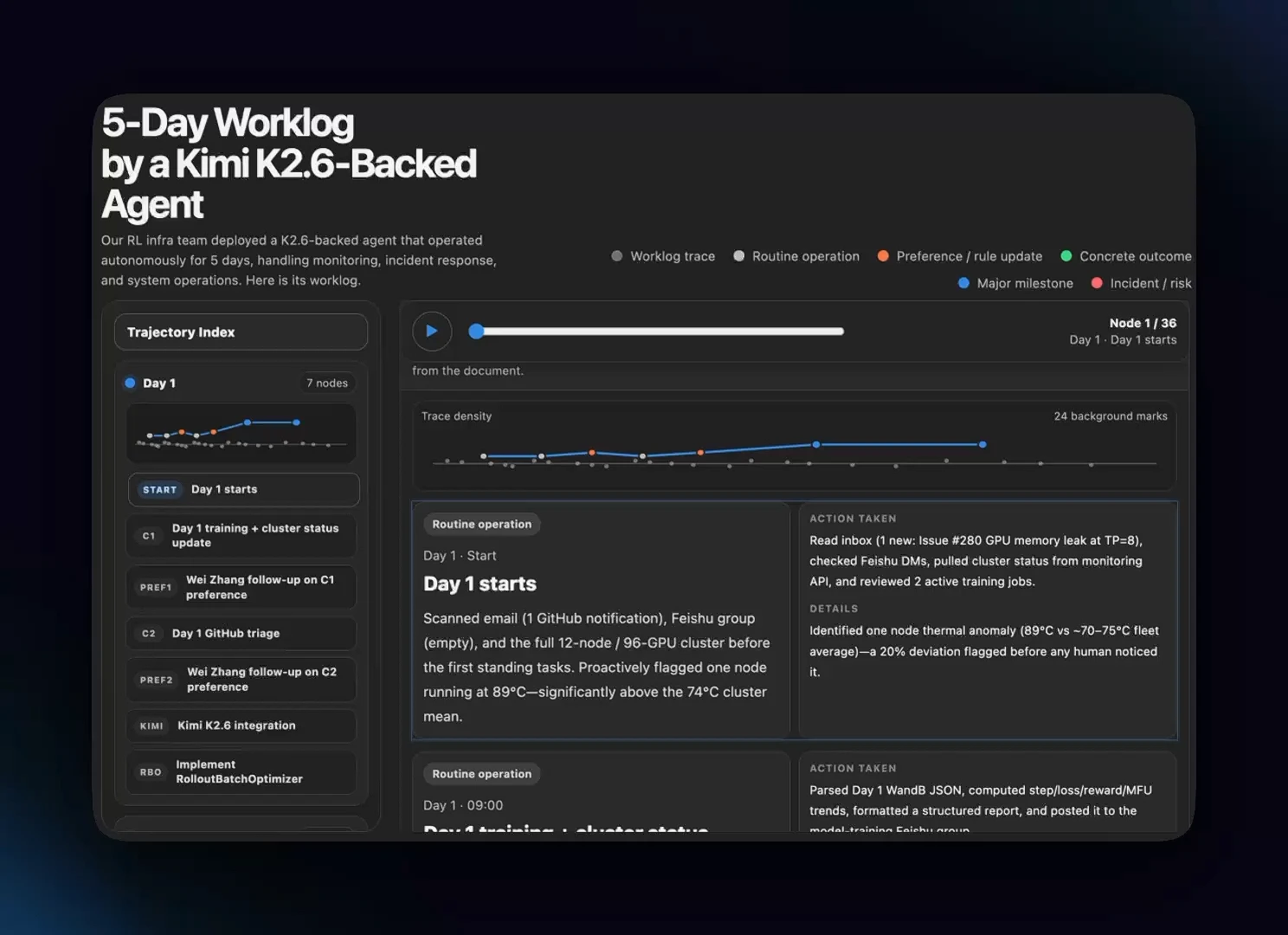

The second thing to understand is what K2.6 is built to do. Most of the famous American models are generalists. They write, translate, summarise, draft code, and answer questions. K2.6 does all of that too, but it is tuned for what the industry calls agentic work. An agent is a model that does not just answer. It plans, calls tools, reads files, runs code, checks the result, and then tries again. According to Moonshot’s launch materials, K2.6 can keep one of these loops running for more than twelve hours, making more than four thousand tool calls in a single session, and can coordinate up to three hundred specialist sub-agents working in parallel. Those are vendor-reported figures, but they describe the profile a developer building an automated engineering team, a research assistant, or a browsing agent actually wants.
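The plan, act, check, and retry loop described above can be sketched in a few lines. This is a minimal illustration and not Moonshot's implementation: `fake_model`, the `run_tests` tool, and the draft names are hypothetical stand-ins, and a real agent would replace `fake_model` with a call to a hosted model.

```python
from typing import Callable

# Hypothetical tool registry. A real agent would expose file access,
# a shell, a browser and so on; here one toy tool fails on the first draft.
TOOLS: dict[str, Callable[[str], str]] = {
    "run_tests": lambda draft: "fail" if draft == "draft-1" else "pass",
}

def fake_model(history: list[str]) -> tuple[str, str]:
    """Stand-in for a model call: decide which tool to use and with what argument."""
    attempt = sum(1 for entry in history if entry.startswith("run_tests")) + 1
    return "run_tests", f"draft-{attempt}"

def run_agent(max_steps: int = 10) -> list[str]:
    """Loop until the check passes or the step budget runs out."""
    history: list[str] = []
    for _ in range(max_steps):
        tool, arg = fake_model(history)               # plan the next action
        result = TOOLS[tool](arg)                     # call the tool
        history.append(f"{tool}({arg}) -> {result}")  # record what happened
        if result == "pass":                          # check the result
            break                                     # success: stop retrying
    return history

if __name__ == "__main__":
    for line in run_agent():
        print(line)
```

The `max_steps` budget is the part that matters for long sessions: the figures quoted above amount to a very large step budget, with the model rather than a fixed script choosing each action.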

For everyday users, Kimi looks and feels like any other chat product. You open the site, type a question, and get an answer. What sits underneath is a mixture-of-experts design with one trillion total parameters, thirty-two billion of which are active for any given token, and a context window of two hundred and sixty-two thousand tokens. That is smaller than the million-token windows offered by Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro, but still large enough to read a full-length book in one go. It also accepts images, charts, and screenshots natively, useful for anyone who works with slides, PDFs, or research papers.
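To make the mixture-of-experts idea concrete, the sketch below first works out what share of the parameters is active per token, using the two figures quoted above, and then shows a toy router picking a few experts from a larger pool. The expert count, the scores, and the value of `k` are invented for illustration and are not K2.6's real configuration.

```python
TOTAL_PARAMS = 1_000_000_000_000  # one trillion total, from the article
ACTIVE_PARAMS = 32_000_000_000    # thirty-two billion active per token

def active_fraction(active: int, total: int) -> float:
    """Share of the model's parameters used for any single token."""
    return active / total

def route_top_k(scores: list[float], k: int) -> list[int]:
    """Return the indices of the k highest-scoring experts, in index order."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])

if __name__ == "__main__":
    print(f"Active share per token: {active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.1%}")
    # Hypothetical router scores for eight experts; only the top two would run.
    toy_scores = [0.1, 2.3, -0.5, 1.7, 0.0, 0.9, -1.2, 0.4]
    print("Experts chosen:", route_top_k(toy_scores, k=2))
```

On the article's figures, about 3.2 per cent of the parameters do the work for any one token, which is why a model this large can still respond at a usable speed.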

One caveat worth flagging up front. A one-trillion-parameter model does not run on a laptop. Moonshot’s free-to-download framing is literally accurate, but serving the full model at useful speed calls for substantial GPU infrastructure, typically a cluster of data-centre cards. Most UK businesses will touch K2.6 through the Moonshot API, through a third-party provider such as OpenRouter or Cloudflare Workers AI, or through a managed version on Hugging Face inference endpoints. The value of the open release, for most British teams, is not that they will run it themselves but that somebody else will, at prices the market has not seen before.
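A rough back-of-envelope calculation shows why. The sketch below estimates the memory needed just to hold one trillion weights at a few common numeric precisions, then divides by an assumed 80 GB of memory per data-centre card. The precisions and the 80 GB figure are assumptions for illustration; the article does not state how K2.6 is stored or served, and real deployments need extra headroom on top of the weights.

```python
TOTAL_PARAMS = 10**12          # one trillion parameters, from the article
GPU_MEMORY_BYTES = 80 * 10**9  # assumed 80 GB per card (illustrative)

def weight_bytes(params: int, bits_per_param: int) -> int:
    """Memory needed to hold the weights alone, in bytes."""
    return params * bits_per_param // 8

def min_gpus(total_bytes: int, gpu_bytes: int = GPU_MEMORY_BYTES) -> int:
    """Smallest number of cards whose combined memory fits the weights."""
    return -(-total_bytes // gpu_bytes)  # ceiling division on integers

if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_bytes(TOTAL_PARAMS, bits)
        print(f"{bits}-bit: {size / 10**9:,.0f} GB, at least {min_gpus(size)} cards")
```

Under those assumptions the weights alone come to roughly 2,000 GB at 16-bit, 1,000 GB at 8-bit, and 500 GB at 4-bit, or at least 25, 13, and 7 cards respectively.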

Nothing on the market does exactly what Kimi K2.6 does at open-weight pricing. Among the rivals, Claude Opus 4.6 leads on polished assistant behaviour, GPT-5.4 remains the one to beat on raw coding benchmarks, and Gemini 3.1 Pro matches the other American flagships on large-context work while pushing hard on multimodal reasoning.

| Capability | Kimi K2.6 | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|---|
| Context window | 262K tokens | 1M tokens | 1M tokens | 1M tokens |
| Multimodal inputs | Yes, native vision via MoonViT | Yes | Yes | Yes |
| Agent and long-horizon runs | 300 sub-agents, 4,000+ steps, 12+ hour sessions (vendor-reported) | Native tool use, long runs | Tool use, long runs | Tool use, long runs |
| Coding strength (SWE-Bench Pro, vendor-reported) | 58.6 | 53.4 | 57.7 | 54.2 |
| Pricing (per M tokens, direct API) | $0.95 in / $4.00 out | $5.00 in / $25.00 out | $2.50 in / $15.00 out | $2.00 in / $12.00 out |
| Open weights | Yes, Modified MIT licence | No | No | No |
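
To see what the pricing row means in practice, the sketch below prices one hypothetical monthly workload at each vendor's listed direct-API rate. The workload of 10 million input tokens and 2 million output tokens is an invented example; the rates are the ones in the table above.

```python
# (input, output) price in US dollars per million tokens, from the table above
PRICES = {
    "Kimi K2.6": (0.95, 4.00),
    "Claude Opus 4.6": (5.00, 25.00),
    "GPT-5.4": (2.50, 15.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
}

def workload_cost(model: str, input_millions: float, output_millions: float) -> float:
    """Direct-API cost in dollars for a workload measured in millions of tokens."""
    price_in, price_out = PRICES[model]
    return input_millions * price_in + output_millions * price_out

if __name__ == "__main__":
    # Hypothetical workload: 10M input tokens and 2M output tokens.
    baseline = workload_cost("Kimi K2.6", 10, 2)
    for model in PRICES:
        cost = workload_cost(model, 10, 2)
        print(f"{model}: ${cost:.2f} ({cost / baseline:.1f}x Kimi K2.6)")
```

For this example workload the bill comes to $17.50 on Kimi K2.6, against $100.00, $55.00, and $44.00 for the three rivals. A different mix of input and output tokens would shift the ratios.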

The misconception worth naming

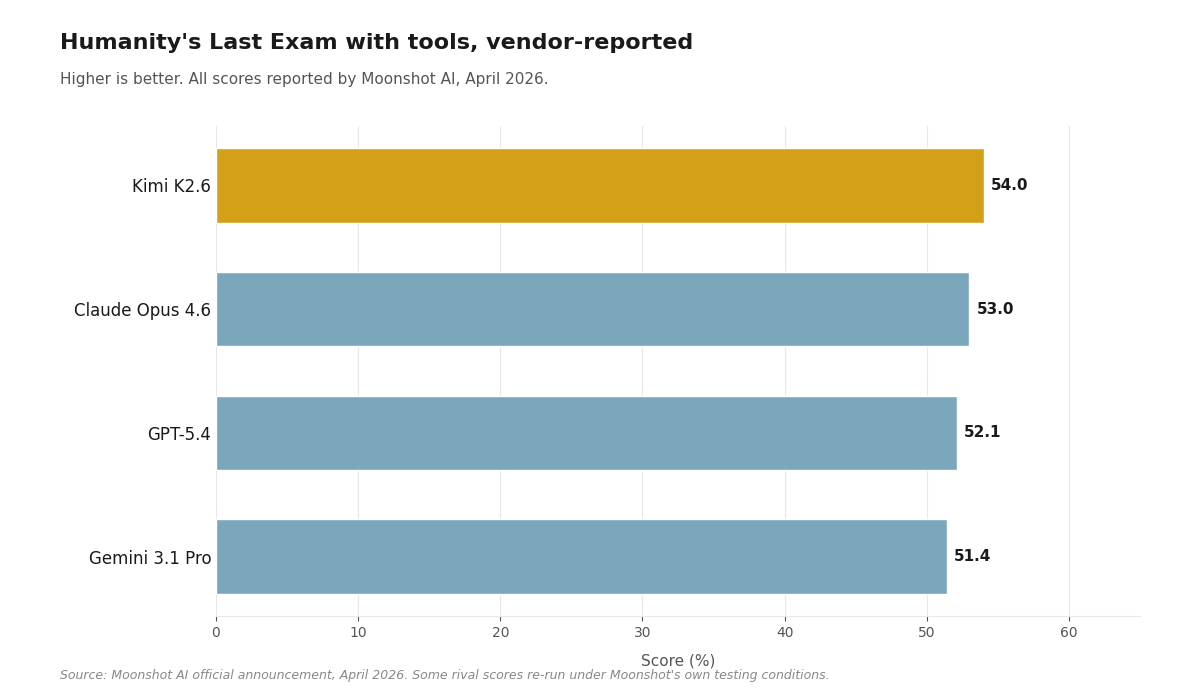

Most British readers assume a free Chinese model must be meaningfully worse than the paid American flagships. That assumption was roughly correct a year ago. It is no longer. On Humanity’s Last Exam, a benchmark specifically designed to be hard enough to humble frontier models, K2.6 with tools scores 54.0 per cent. Claude Opus 4.6 scores 53.0, GPT-5.4 scores 52.1, and Gemini 3.1 Pro scores 51.4. Those numbers, and every other score in this post, are reported by Moonshot itself, with some rival figures re-run under Moonshot’s own testing conditions rather than drawn from the American labs directly. The distinction matters. The release shows the gap has closed enough for an open model to sit in the same conversation as the paid flagships, not that it has definitively overtaken them. On software engineering tasks, K2.6 edges past GPT-5.4 on the demanding SWE-Bench Pro test, 58.6 to 57.7, with Claude Opus 4.6 at 53.4 and Gemini 3.1 Pro at 54.2, again on Moonshot’s own runs. None of this makes the American models obsolete. It does mean the reflex of equating free with meaningfully worse is out of date.

Humanity’s Last Exam with tools, vendor-reported

Humanity’s Last Exam with tools rewards models that can reason and use outside resources, much like a student with access to a library. Higher is better. All scores reported by Moonshot AI, April 2026; some rival figures re-run under Moonshot’s own testing conditions.

What this means for you

The release matters even if you never plan to touch the command line. For any British business that currently pays for Claude or ChatGPT at scale, K2.6 now offers a genuine alternative with direct-API pricing roughly two to six times below the paid American tier, depending on the rival and on whether input or output tokens dominate, and cheaper still through third-party hosts. For developers, it removes the last practical excuse for not experimenting with long-horizon agents. And for the wider market, it is the clearest evidence yet that the gap between the paid American tier and the free open-weight tier has become small enough to reshape pricing conversations.

- UK teams building coding agents now have a genuinely competitive open-weight option accessible through domestic or European hosts, reducing reliance on US-only vendors and trimming a large cost line.

- Small and mid-sized firms can experiment with long-horizon agents through an API without committing to Anthropic or OpenAI enterprise pricing.

- Expect downstream pressure on flagship pricing from OpenAI, Anthropic, and Google as the open-weight tier closes the quality gap.