GPT-5.5 lands with stronger agentic coding and a steep API price jump

GPT-5.5 arrived on 23 April 2026 with meaningful gains on agentic coding benchmarks and API pricing that has risen substantially from GPT-5.4. Here is what changed and who it is for.

OpenAI’s newest model makes clear gains on long-horizon coding tasks, but the API pricing has risen substantially from its predecessor.

OpenAI released GPT-5.5 on 23 April 2026, and the release carries two distinct stories. On one side, there are genuine improvements in how the model handles complex, multi-step coding tasks and extended autonomous runs. On the other, developers working with the API will pay substantially more than they did for GPT-5.4. Both facts deserve equal attention before you decide whether to upgrade.

At a glance

- Developer: OpenAI (United States)

- Released: GPT-5.5, 23 April 2026; GPT-5.5 Pro same day; API available 24 April 2026

- What it is: Frontier language model optimised for agentic coding, computer use, and long-horizon task completion

- Closest rivals: Claude Opus 4.7, DeepSeek-V4-Pro

- Pricing: API from $5.00 input / $30.00 output per million tokens (standard); ChatGPT Plus from $20/month

- Where to try it: chatgpt.com

GPT-5.5 sits within OpenAI’s GPT-5 series and is positioned as their primary model for agentic workflows: tasks that require the model to plan, execute, and iterate over multiple steps without constant human prompting. Think of it as the difference between a model that answers a question and one that can carry a project through several stages independently. GPT-5.5 leans into the second category, and the benchmarks reflect that focus.

It launches alongside GPT-5.5 Pro, a higher-accuracy variant aimed at users who need the most capable version of the model for complex reasoning and coding. Standard GPT-5.5 is available via the ChatGPT Plus plan at $20 per month. GPT-5.5 Pro is available through the $200 per month ChatGPT Pro subscription, and also on the Business plan at $25 to $30 per user per month. Both are also accessible via the API, where pricing differs significantly between the two variants.

OpenAI describes GPT-5.5 as optimised for coding, agentic computer use, online research, data analysis, and multi-tool task completion. Within Codex, OpenAI’s coding-focused environment, the model operates with a 400,000-token context window. For direct API access, OpenAI markets a context window of up to one million tokens, though one secondary source reports the measured limit as closer to 920,000 tokens. Any system that depends on the upper bound should be verified against the official model documentation at developers.openai.com.
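For a system that leans on the upper bound, a conservative budget check is one way to avoid surprises. The sketch below is illustrative only: the three limits are the figures quoted above, while `fits_context`, the 5% safety margin, and the assumption that prompt and output tokens share a single window are hypothetical choices, not documented OpenAI behaviour.

```python
# Context limits quoted in this article, in tokens.
CODEX_CONTEXT = 400_000           # stated for Codex
API_CONTEXT_MARKETED = 1_000_000  # marketed for direct API access
API_CONTEXT_REPORTED = 920_000    # measured figure from one secondary source


def fits_context(prompt_tokens: int,
                 max_output_tokens: int,
                 limit: int = API_CONTEXT_REPORTED,
                 safety_margin_pct: int = 5) -> bool:
    """Return True if prompt plus reserved output fits under the limit.

    Assumes (hypothetically) that input and output share one window.
    safety_margin_pct is an arbitrary buffer for tokenizer drift and
    system overhead; it is not an OpenAI recommendation.
    """
    if prompt_tokens < 0 or max_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    # Integer arithmetic keeps the usable budget exact.
    usable = limit * (100 - safety_margin_pct) // 100
    return prompt_tokens + max_output_tokens <= usable
```

Defaulting to the lower, reported figure rather than the marketed one means a request that passes this check should also pass if the marketed limit turns out to be accurate.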

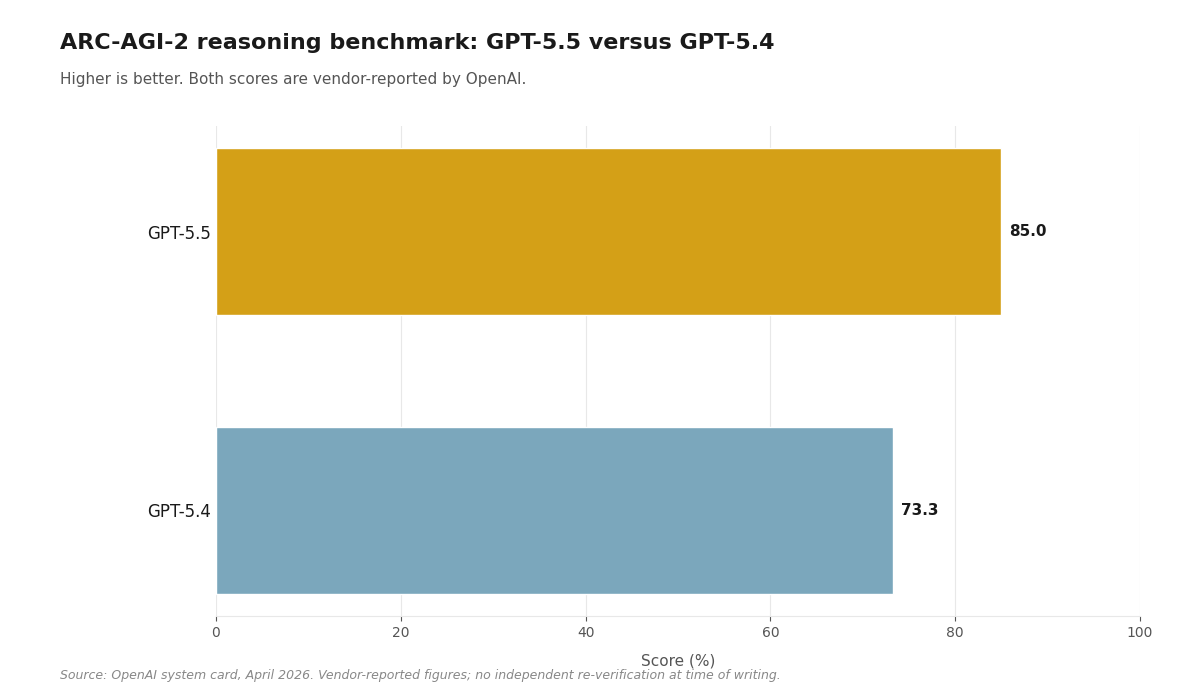

The main performance story centres on agentic coding and complex reasoning. According to OpenAI’s published benchmark table in their system card, GPT-5.5 scores 85.0% on ARC-AGI-2, compared to 73.3% for GPT-5.4. That 11.7 percentage point gain is the largest single-generation improvement OpenAI has shown on this benchmark. On SWE-Bench Pro, which measures the model’s ability to resolve real software engineering tasks from GitHub repositories, OpenAI reports a score of 58.6%. On GPQA Diamond, a demanding graduate-level science benchmark, the reported figure is 93.6%. All of these scores come from OpenAI’s own system card and have not been independently re-verified by a third party at the time of writing.
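A percentage-point gain can be read in more than one way, and the arithmetic is short enough to spell out. The sketch below uses only the two vendor-reported ARC-AGI-2 scores quoted above; the function name and the choice of three views (point gain, relative gain, reduction in failed tasks) are illustrative.

```python
def benchmark_gains(old_score: float, new_score: float) -> tuple[float, float, float]:
    """Return (point gain, relative gain %, error-rate reduction %).

    Scores are percentages between 0 and 100. Results are rounded
    to one decimal place for readability.
    """
    if not (0.0 <= old_score < 100.0 and 0.0 <= new_score <= 100.0):
        raise ValueError("scores must be percentages, with old_score below 100")
    point_gain = new_score - old_score
    relative_gain = point_gain / old_score * 100.0
    # Share of previously failed tasks that are now solved.
    error_reduction = point_gain / (100.0 - old_score) * 100.0
    return round(point_gain, 1), round(relative_gain, 1), round(error_reduction, 1)


# Vendor-reported ARC-AGI-2 scores: GPT-5.4, then GPT-5.5.
points, relative, fewer_errors = benchmark_gains(73.3, 85.0)
```

On these inputs the point gain is 11.7, the relative gain is about 16%, and the share of previously failed tasks now solved is about 44%. All three describe the same two numbers, so it matters which one a headline quotes.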

OpenAI also claims that GPT-5.5 uses around 40% fewer output tokens to complete equivalent Codex tasks compared to GPT-5.4, at the same speed. This is a vendor-reported figure. If it holds in production, the effective cost per completed task would be lower than the headline token price implies. The claim has not been independently tested.
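The effect of that claim on cost can be sketched in a few lines. This is a back-of-the-envelope model, not a pricing tool: it assumes the 40% figure applies uniformly to output tokens, ignores input tokens entirely, and takes the older model's output price as a value you supply yourself, since this article does not quote one. Both function names are hypothetical.

```python
def effective_output_price(list_price_per_m: float, token_reduction: float) -> float:
    """Price per million *baseline-equivalent* output tokens.

    token_reduction is the fraction of output tokens saved relative
    to the older model (0.40 for the vendor-reported Codex claim).
    """
    if not 0.0 <= token_reduction < 1.0:
        raise ValueError("token_reduction must be in [0, 1)")
    return list_price_per_m * (1.0 - token_reduction)


def break_even_reduction(new_price_per_m: float, old_price_per_m: float) -> float:
    """Token reduction at which output cost per task matches the old model.

    old_price_per_m is a placeholder for whatever you paid previously.
    """
    if new_price_per_m <= 0 or old_price_per_m <= 0:
        raise ValueError("prices must be positive")
    return max(0.0, 1.0 - old_price_per_m / new_price_per_m)
```

With the $30.00 standard output price and a 40% reduction, `effective_output_price(30.00, 0.40)` comes to roughly $18 per million baseline-equivalent tokens. Whether that is cheaper than before depends on the price you were paying, which is what `break_even_reduction` is for.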

One notable absence in the launch materials: OpenAI has not published the parameter count or detailed architectural specification for GPT-5.5. Characterisations of the model as a post-training upgrade on the GPT-5 base come from secondary sources, not from OpenAI’s official documentation. It is worth treating architectural framing from third parties with some caution.

No rival offers quite the same combination of coding, computer use, and long-horizon research within a single unified product, but two come close. Claude Opus 4.7 from Anthropic covers similar territory on complex reasoning and agentic tasks. DeepSeek-V4-Pro offers strong agentic capability at a fraction of the API cost, though it is a proprietary API model rather than an open-weight one.

| Capability | GPT-5.5 | Claude Opus 4.7 | DeepSeek-V4-Pro |
|---|---|---|---|
| Context window | Up to 1M tokens (API); 400K in Codex | 200K tokens | 1M tokens |
| Multimodal inputs | Yes (text, images, files) | Yes (text, images, documents) | No (text only) |
| Agent / long-horizon runs | Yes (Codex, computer use) | Yes (computer use) | Yes (function calling, tool use) |
| Coding strength | SWE-Bench Pro 58.6% (vendor-reported, OpenAI) | No directly comparable published figure at time of writing | No directly comparable published figure at time of writing |
| Pricing (per M tokens, direct API) | $5.00 in / $30.00 out (standard); $30.00 in / $180.00 out (Pro) | $5.00 in / $25.00 out (standard API; source: Anthropic docs, April 2026) | $1.74 in / $3.48 out (limited-time 75% off until 5 May 2026) |
| Open weights | No (proprietary) | No (proprietary) | No (API-only; DeepSeek-V3 base is open-weight) |
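Per-token prices are easier to compare once they are applied to a concrete workload. The sketch below takes the prices from the pricing row of the table and computes the bill for a given token mix. The `Price` class, the `workload_cost` function, and the example workload of 2 million input and 0.5 million output tokens are all hypothetical; the DeepSeek entry uses the limited-time discounted rate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD per million tokens, direct API."""
    input_per_m: float
    output_per_m: float


# Prices as listed in the comparison table above.
PRICES = {
    "GPT-5.5": Price(5.00, 30.00),
    "GPT-5.5 Pro": Price(30.00, 180.00),
    "Claude Opus 4.7": Price(5.00, 25.00),
    "DeepSeek-V4-Pro (discounted)": Price(1.74, 3.48),
}


def workload_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Total USD cost for a workload at the given per-million prices."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    total = input_tokens * price.input_per_m + output_tokens * price.output_per_m
    return total / 1_000_000


# Hypothetical workload: 2M input tokens, 0.5M output tokens.
costs = {name: workload_cost(p, 2_000_000, 500_000) for name, p in PRICES.items()}
```

For that example mix the totals come to about $25.00 for GPT-5.5, $150.00 for GPT-5.5 Pro, $22.50 for Claude Opus 4.7, and $5.22 for DeepSeek-V4-Pro at the discounted rate. The ranking can shift with the input-to-output ratio, so it is worth rerunning with token counts from your own traffic.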

The misconception worth naming

Most readers will see the ARC-AGI-2 score of 85.0% and read it as the model being 85% of the way to artificial general intelligence. That is not what the benchmark measures. ARC-AGI-2 is a test of fluid reasoning on novel visual pattern tasks. Improvement on this benchmark indicates better generalisation on its specific task type, which is meaningful, but it does not translate directly to a broader claim about general capability. The more operationally relevant figure for developers is the SWE-Bench Pro score of 58.6%, which measures performance on actual software engineering work from real repositories. Even there, the gap between benchmark performance and production reliability depends heavily on the specific codebase and task structure. Benchmark leads of this kind are worth noting; they are not the whole story.

What this means for you

The pricing shift is the most immediate practical consideration. At $5.00 per million input tokens and $30.00 per million output tokens for the standard API variant, GPT-5.5 represents a substantial increase from earlier GPT-5 series pricing. For GPT-5.5 Pro, the API cost rises to $30.00 input and $180.00 output per million tokens, which places it firmly at the top of the frontier model pricing range. OpenAI’s vendor-reported claim of 40% fewer output tokens for Codex tasks would, if verified in your workload, meaningfully reduce the effective cost per completed task.

- If you are building agentic coding workflows with Codex, GPT-5.5 is the clearest upgrade path right now: the SWE-Bench Pro score and the ARC-AGI-2 gain are directly relevant, and the model is already integrated into OpenAI’s tooling. Test the token efficiency claim against your own tasks before assuming it translates.

- If API cost sensitivity is high, DeepSeek-V4-Pro offers capable agentic performance at $1.74 input and $3.48 output per million tokens (with a 75% limited-time discount through 5 May 2026). It lacks multimodal input and the tight Codex integration, but for text-based agentic tasks the cost difference is significant.

- If you are on the ChatGPT Plus plan at $20 per month, you already have access to standard GPT-5.5 at no extra cost. For most personal and small-team use cases, this is the natural starting point before considering whether the API or the Pro tier adds enough to justify the additional spend.
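The first recommendation above suggests testing the token efficiency claim on your own tasks. One minimal way to do that, assuming you have logged output token counts for the same set of tasks run on both models, is sketched below. The function name and the sample numbers are hypothetical, and a comparison of means says nothing about whether the shorter outputs were equally correct.

```python
from statistics import mean
from typing import Sequence


def observed_output_reduction(baseline_tokens: Sequence[int],
                              candidate_tokens: Sequence[int]) -> float:
    """Fractional reduction in mean output tokens per task.

    baseline_tokens: per-task output token counts from the older model.
    candidate_tokens: per-task counts from the newer model on the same tasks.
    A result of 0.40 would match the vendor-reported claim.
    """
    if not baseline_tokens or not candidate_tokens:
        raise ValueError("both samples must be non-empty")
    base = mean(baseline_tokens)
    if base <= 0:
        raise ValueError("baseline mean must be positive")
    return 1.0 - mean(candidate_tokens) / base


# Hypothetical logged counts for three tasks on each model.
reduction = observed_output_reduction([1000, 2000, 3000], [600, 1200, 1800])
```

A negative result means the newer model used more output tokens on your tasks, in which case the headline price understates your real cost rather than overstating it.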

According to OpenAI’s published benchmark data, GPT-5.5 scores 85.0% on ARC-AGI-2, up from 73.3% for GPT-5.4. That 11.7 percentage point gain is the largest single-generation improvement OpenAI has reported on this benchmark, and it arrives alongside API output pricing that has risen to $30.00 per million tokens for the standard variant.