Anthropic’s new model tier finds 27-year-old bugs. Who can actually use Claude Mythos Preview?

Claude Mythos Preview is Anthropic’s most capable model, gated to security researchers. Here is what it can do, and why it is not publicly available.

Anthropic has built a model it will not release. Claude Mythos Preview is its most capable AI to date, already finding security flaws that have sat undetected in major operating systems for decades. Here is what it can do, why access is gated, and what it means if you work in software or security.

At a glance

| Field | Details |
|---|---|
| Developer | Anthropic (USA) |
| Released | Gated research preview, 7 April 2026 |
| What it is | Frontier language model; new tier above Opus, built for long-horizon agentic and security tasks |
| Closest rivals | Claude Opus 4.7 (publicly available); GPT-5.5 (no published side-by-side benchmark against Mythos) |
| Pricing | $25 per million input tokens / $125 per million output tokens, post-preview, for Project Glasswing participants only. No public retail pricing published. |
| Where to learn more | anthropic.com/glasswing |

Claude Mythos Preview sits in a new tier above Opus in Anthropic’s model family, described by the company as larger and more intelligent than the Opus line. Architecture and parameter count have not been published. The model accepts text and image inputs, produces text output, carries a one-million-token context window, and supports extended reasoning. It launched alongside Project Glasswing, a joint initiative pairing the model with security teams working on real-world software vulnerabilities.

Founding partners include Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks, alongside more than 40 additional organisations that build or maintain critical infrastructure software. Anthropic provides $100 million in usage credits for programme participants, plus $4 million in donations to open-source security foundations: $2.5 million to Alpha-Omega and the OpenSSF via the Linux Foundation, and $1.5 million to the Apache Software Foundation. Access to the model runs through the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry.

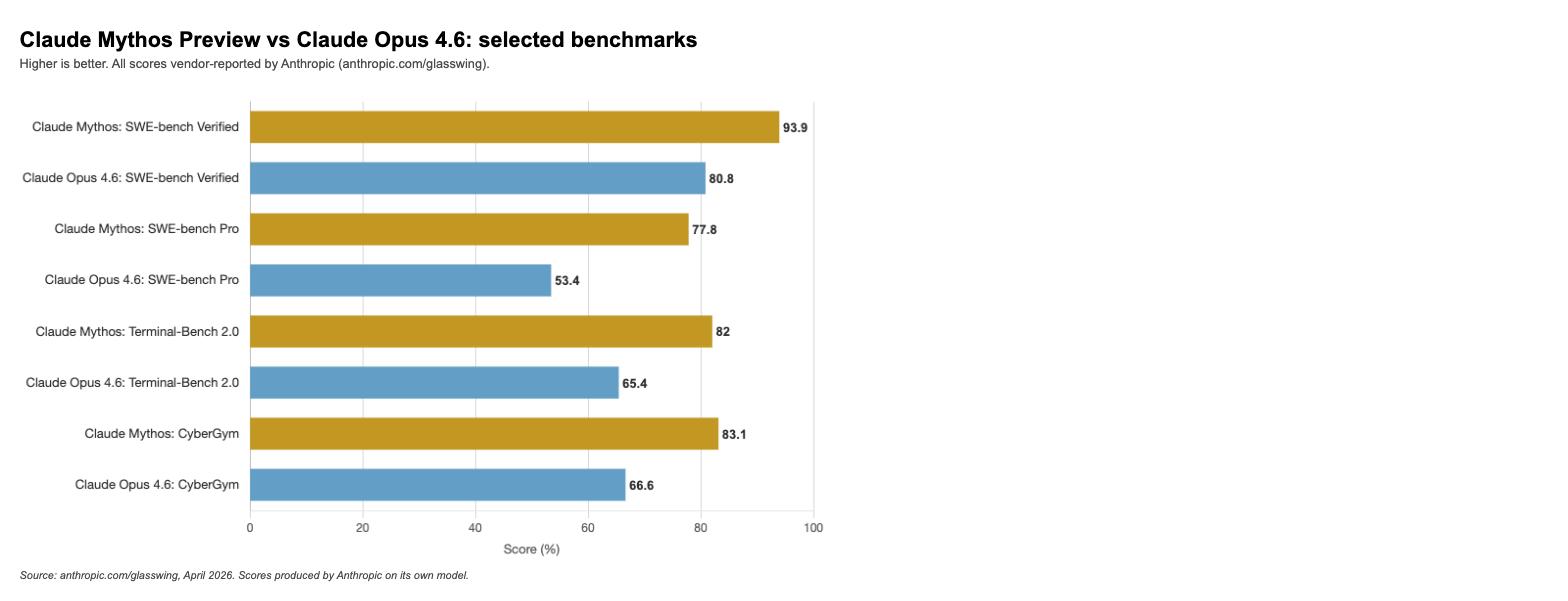

The benchmark picture. The capability benchmarks come almost entirely from Anthropic running its own model under its own testing conditions. According to Anthropic’s published data at anthropic.com/glasswing, Mythos Preview scores 93.9% on SWE-bench Verified against 80.8% for Claude Opus 4.6. On SWE-bench Pro, a harder variant designed to reduce memorisation risk, the gap widens: 77.8% versus 53.4%. Anthropic states that memorisation screens were applied and the lead holds after excluding flagged problems. On Terminal-Bench 2.0, which tests sustained agentic coding over long sessions, Mythos scores 82.0% versus 65.4% for Opus 4.6.

The UK AI Security Institute (AISI) provided the only independent evaluation, covering cybersecurity tasks. In expert-level capture-the-flag tests that no model could complete before April 2025, Mythos achieved a 73% success rate. The AISI also ran The Last Ones (TLO), a 32-step corporate network attack simulation estimated to require around 20 human hours. Mythos was the first model to complete it from start to finish, doing so in 3 of 10 attempts, averaging 22 of 32 steps. Claude Opus 4.6 averaged 16 steps in the same test. (Source: aisi.gov.uk, 13 April 2026.)

Anthropic’s own Red Team tests, reported at red.anthropic.com/2026/mythos-preview/, describe further results. Mythos developed 181 working JavaScript shell exploits from Firefox 147 engine vulnerabilities; Opus 4.6 produced 2 from several hundred attempts under the same conditions. On an internal OSS-Fuzz test across approximately 1,000 repositories, Mythos achieved full control-flow hijack — the highest severity tier — on 10 fully patched targets. All of these results are vendor-reported by Anthropic.

Real-world bugs found during the programme include a 27-year-old OpenBSD remote crash flaw, a 16-year-old integer overflow in FFmpeg’s H.264 codec, chained Linux kernel vulnerabilities escalating user access to machine control, and a 17-year-old FreeBSD NFS remote code execution flaw that Mythos exploited in under half a day at a cost of under $1,000.

How it compares to what you can actually use. Nothing on the market does quite what Claude Mythos Preview does in a controlled defensive security setting. The comparison below uses the figures Anthropic published at launch, which benchmarked Mythos against Claude Opus 4.6. Claude Opus 4.7 has since been released as the current publicly available flagship model with improved scores across several benchmarks, but is not included in Anthropic’s Glasswing system card.

| Capability | Claude Mythos Preview | Claude Opus 4.6 | GPT-5.5 |
|---|---|---|---|
| Context window | 1M tokens | See docs.anthropic.com | See platform.openai.com/docs |
| Multimodal inputs | Text, image | Text, image | Text, image |
| Reasoning support | Yes | Yes | Yes |
| Coding: SWE-bench Verified (vendor-reported) | 93.9% (Anthropic, anthropic.com/glasswing) | 80.8% (Anthropic, same source) | Not in Anthropic’s Glasswing system card; see platform.openai.com |
| Independent cybersecurity eval | AISI CTF: 73% success rate (independent, April 2026) | Not evaluated by AISI in this category | Not evaluated by AISI in this category |
| Pricing (per M tokens, API) | $25 in / $125 out (Glasswing participants, post-preview only) | See anthropic.com/pricing | See platform.openai.com/pricing |
| Public availability | Gated research preview only | Generally available | Generally available |
| Open weights | No | No | No |

Note: the table uses the Opus 4.6 figures published in Anthropic’s Glasswing system card because those are the direct comparisons Anthropic ran against Mythos Preview. Claude Opus 4.7, now Anthropic’s publicly available flagship, has improved on several of those figures.
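To put the quoted per-million-token rates in concrete terms, here is a rough cost sketch in Python. The rates are the ones cited in this article; the workload (one full one-million-token context plus 50,000 output tokens) and the function name `estimate_cost` are hypothetical, and the calculation ignores any credits, discounts, or billing details Anthropic may apply.

```python
from decimal import Decimal

# Rates quoted in the article (USD per million tokens, Glasswing participants).
INPUT_PRICE_PER_M = Decimal("25")
OUTPUT_PRICE_PER_M = Decimal("125")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the USD cost of one request at the quoted rates."""
    input_cost = Decimal(input_tokens) / TOKENS_PER_MILLION * INPUT_PRICE_PER_M
    output_cost = Decimal(output_tokens) / TOKENS_PER_MILLION * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical workload: a full 1M-token context and 50,000 tokens of output.
cost = estimate_cost(1_000_000, 50_000)
print(f"Estimated cost: ${cost:.2f}")  # Estimated cost: $31.25
```

At these rates the output side dominates quickly: 50,000 output tokens cost $6.25, a quarter of the price of the entire one-million-token input.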

The misconception worth naming. Most people reading about Mythos assume it represents a general leap forward across all AI tasks. The benchmark data does not quite support that framing. On GPQA Diamond, a hard graduate-level reasoning test, Mythos scores 94.6% against Opus 4.6’s 91.3%, a 3.3-percentage-point margin. On BrowseComp, the gap is 86.9% versus 83.7%. These are real but incremental improvements, not a step change in general capability.

Where Mythos is genuinely in a different category from every other publicly tested model is cybersecurity: autonomous vulnerability discovery and exploitation at a scale and sophistication that, according to the independent AISI evaluation, no other model has yet matched. Anthropic itself notes that standard benchmarks are largely saturated at Mythos’s capability level, which is why its testing shifted to novel real-world tasks. A model that is modestly better at general reasoning but categorically more capable at autonomous cyberattack is a different kind of product from a simple upgrade.
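The contrast between the coding and general-reasoning results is easier to see with the margins worked out side by side. This short Python sketch uses only the vendor-reported scores quoted in this article; the dictionary layout and the function name `margins` are illustrative choices, not anything Anthropic publishes.

```python
# (Mythos Preview, Opus 4.6) scores in percent, as quoted in the article.
SCORES = {
    "SWE-bench Verified": (93.9, 80.8),
    "SWE-bench Pro": (77.8, 53.4),
    "Terminal-Bench 2.0": (82.0, 65.4),
    "GPQA Diamond": (94.6, 91.3),
    "BrowseComp": (86.9, 83.7),
}


def margins(scores):
    """Return the Mythos lead over Opus 4.6 in percentage points per benchmark."""
    return {name: round(mythos - opus, 1) for name, (mythos, opus) in scores.items()}


# Print benchmarks from the widest lead to the narrowest.
for name, gap in sorted(margins(SCORES).items(), key=lambda item: -item[1]):
    print(f"{name:<20} +{gap:.1f} pts")
```

On these figures the agentic coding benchmarks show leads of 13.1 to 24.4 percentage points, while GPQA Diamond and BrowseComp show leads of 3.3 and 3.2.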

Source: anthropic.com/glasswing, April 2026. All benchmark scores in the system card are vendor-reported by Anthropic on its own model. Independent evaluation by the AISI covers cybersecurity tasks only.

What this means for you. If you work in software security, infrastructure, or open-source maintenance, the practical starting point is anthropic.com/glasswing, where Anthropic describes the programme and how to register interest. A public report on vulnerabilities found and fixed is due within 90 days of the programme’s launch.

For everyone else, Claude Opus 4.7 remains the most capable generally available Claude model; pricing and specs are at anthropic.com/pricing. Project Glasswing is aimed at organisations that build or maintain critical software infrastructure. The programme is not designed for individual developers or small teams without a security research mandate.

Every capability benchmark cited in Anthropic’s announcement was produced by Anthropic on its own model. The AISI’s independent evaluation covers cybersecurity tasks only; read the full report at aisi.gov.uk before drawing conclusions about general capability. Broader access to Mythos, if it comes, will likely remain gated. Anthropic plans new safeguards alongside a future Opus release, and a Cyber Verification Program for security professionals is in development.

Images: Anthropic / Project Glasswing. Used for editorial reporting purposes.