What is a large language model (LLM)?

When people talk about ChatGPT, Claude, or Gemini, they are really talking about a large language model. LLM is the term that describes what these systems are underneath the friendly chat window. Ten minutes spent on the basics explains both what these systems do brilliantly and why they sometimes get things badly wrong.

Break the name down and the mystery fades. Language model: a system that has learned patterns in language. Large: trained on an enormous amount of text. That is genuinely it.

A large language model is a statistical model of language. It is built by processing more text than any human could read in thousands of lifetimes. It learns the patterns of how words, sentences, and ideas relate to each other. The output feels like thinking because language carries so much of our thinking inside it.

How a large language model actually learns

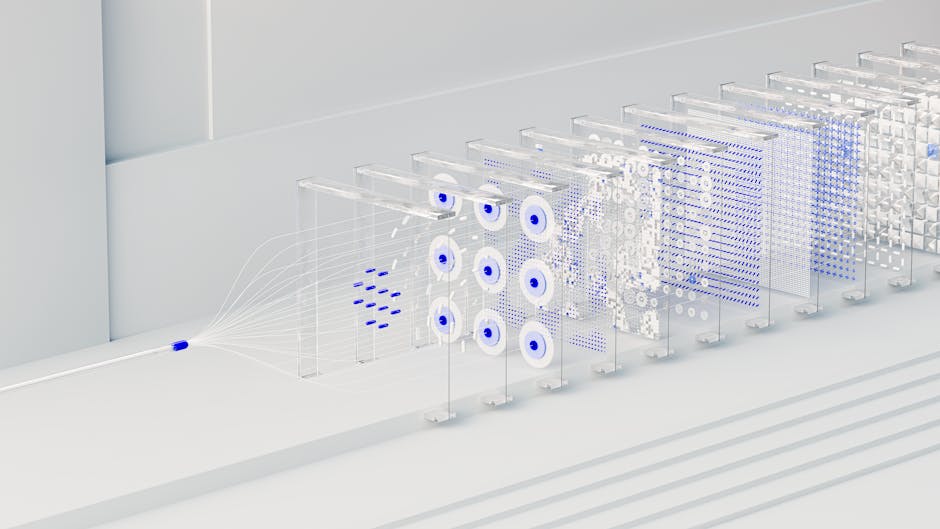

The training task sounds almost childishly simple. Predict the next word. (Strictly, models predict tokens, which are words or pieces of words, but the idea is the same.) Given a sequence of text, what word is most likely to come next? Do this billions of times across trillions of words.
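To make the idea concrete, here is a toy sketch of next-word prediction. It is not how a real LLM works inside: real models use neural networks trained on tokens, whereas this one just counts which word follows which in a tiny made-up corpus. The function names `train_bigram` and `predict_next` are invented for this illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# A tiny made-up corpus; a real model sees trillions of words.
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept ."
model = train_bigram(corpus)

print(predict_next(model, "the"))  # cat ("cat" follows "the" three times)
print(predict_next(model, "sat"))  # on
```

Even this crude counter shows the core loop: read text, record what tends to come next, then use those records to guess. An LLM replaces the counting table with billions of learned parameters, which is what lets it generalise to sentences it has never seen.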

The training data comes from books, Wikipedia, court filings, code repositories, academic papers, and random internet conversations. Something surprising happens along the way. The model does not only learn to predict words. It develops something that looks like an understanding of concepts, reasoning, tone, and nuance.

Nobody explicitly taught it how to write a legal memo or solve a logic puzzle. These abilities emerged as side effects of learning to predict text well. Researchers call them emergent capabilities. They are part of why the field surprised even the people building it.

Models are often described by their size: 70 billion parameters, say, or 1.8 trillion. Parameters are the dials inside the machine that training adjusts. Each one is a numerical value the system learned to set.
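A quick piece of arithmetic shows why these numbers matter in practice. The sketch below estimates how much memory is needed just to store a model's parameters. It assumes each parameter is stored in 2 bytes (16-bit numbers), which is a common choice but an assumption here, not something every model does. The function name `weights_size_gb` is made up for this example.

```python
def weights_size_gb(num_parameters, bytes_per_parameter=2):
    """Rough storage needed for the parameters alone, in gigabytes.

    Assumes 2 bytes per parameter by default (16-bit storage).
    Ignores everything else a running model needs in memory.
    """
    return num_parameters * bytes_per_parameter / 1e9


print(weights_size_gb(70_000_000_000))  # 140.0
```

On that assumption, a 70 billion parameter model needs roughly 140 GB just to hold its dials, far more than a typical laptop can offer.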

More parameters generally means a more capable model, but size is far from the only thing that matters. The quality of the training data and the techniques used during training matter just as much. A well-trained smaller model can beat a sloppy larger one most days of the week.

There is one more piece of the architecture worth knowing. Modern LLMs are built on a design called the transformer. Google researchers first described it in 2017. The transformer lets the model pay attention to different parts of a long passage at once.

Older designs read text one word at a time, which made it hard to connect words that sat far apart. The transformer's ability to weigh a whole passage at once changed what these systems could do. You do not need to know how it works in detail. You only need to know that almost every LLM you have heard of sits on top of the same transformer idea.
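For readers who want a glimpse anyway, the "paying attention" step can be sketched in a few lines. This is a minimal illustration of scaled dot-product attention for a single query, using made-up two-number vectors. In a real transformer the queries, keys, and values are learned during training, have hundreds or thousands of dimensions, and are computed many times in parallel. The function names here are chosen for this example.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query vector.

    Each key is compared with the query; the closer the match,
    the more that position's value contributes to the output.
    """
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


# Three positions in a passage, each with a made-up key and value.
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [2.0, 0.0]  # most similar to the first key

weights, output = attend(query, keys, values)
print(weights)  # the first position gets the largest weight
print(output)
```

The point to take away is that every position is scored against the query in one go, rather than being read in sequence. That is what lets the model link a word to something relevant many sentences earlier.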

What an LLM is good at

Large language models are strong at anything that involves manipulating or generating language: writing, summarising, explaining, translating, drafting code, answering questions, planning, editing, and brainstorming. They are also unexpectedly good at step-by-step reasoning. That is not something you would obviously predict from a system built on word prediction.

Give them room to think out loud. They will often work through a problem in a way that looks uncannily like how a patient human would do it. This is why prompts that say “think step by step” tend to produce better answers. The model is following the grain of the way it was trained.

They are also strong at format. Ask for a table, a bulleted list, a polite email, or a thousand-word essay. The model will usually hit the shape you asked for, though exact word counts tend to be approximate. That ability to follow instructions about form and length is one of the reasons these tools became useful in real work.

Where a large language model falls short

LLMs are weak in ways that matter. They hallucinate. This is the term for when an LLM produces text that sounds confident and fluent but is simply wrong. It is not lying in any meaningful sense.

The model is generating the most statistically plausible response given what it learned. Sometimes the plausible response is not the accurate one. For anything where accuracy counts, verify what the model tells you.

They also have a knowledge cutoff. They know what was in their training data up to the month training ended. They know nothing after that, unless they have been given a tool to search the live web. Ask a model trained last year about a court ruling from last week and you may get a confident invention.

There is a common misconception worth clearing up. People often assume an LLM looks things up in a database, like a very fast search engine. It does not. Unless it has been given a search tool, the model is generating from patterns it absorbed during training.

This is why many UK banks, law firms, and NHS trusts now route their LLMs through retrieval systems. Those systems pass in real documents before the model answers. That keeps the language quality while leaving the model far less room to make up facts. The technique is called retrieval-augmented generation, often shortened to RAG.
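The shape of a retrieval step can be sketched very simply. The example below is a stand-in, not a production design: it picks the most relevant document by counting shared words, where real systems typically use more sophisticated search. The documents are invented office policies, and the function names `retrieve` and `build_prompt` are made up for this illustration. The resulting prompt is what would be sent to the model.

```python
import re


def tokenize(text):
    """Lower-case the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents):
    """Put the retrieved text in front of the question for the model to use."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the answer is not there, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Invented example documents, not real policies.
documents = [
    "Office opening hours are 9am to 5pm, Monday to Friday.",
    "Client letters must be signed by a partner before posting.",
    "Expense claims are due by the last working day of each month.",
]

question = "When are expense claims due?"
print(build_prompt(question, documents))
```

The model still does the writing, but the facts now come from a document you control rather than from whatever it happened to absorb in training.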

A practical UK example

Imagine you run a small UK accountancy firm and want an LLM to draft client letters. It will do a fine job of producing clear, polite, professional prose. Now ask it for the current rate of Corporation Tax. Or ask it for the exact penalties for filing a Self Assessment return late.

It may give you last year’s numbers or hallucinate entirely. The fix is simple. Paste the current HMRC guidance into the chat and ask the model to draft the letter using that text.

The language skill is the part you want. The facts you supply yourself.

The same pattern holds across professional services. A solicitor uses the model for the prose and brings the case law. A surveyor uses it for the report structure and brings the measurements. A GP practice uses it to draft patient letters and brings the clinical detail.

The model never replaces the expertise. It compresses the writing time around the expertise. That is often where the working day actually goes.

The stack of tricks around a modern LLM

It helps to know a little about the layers around the raw model. Reinforcement Learning from Human Feedback, usually shortened to RLHF, is where human raters score the model’s answers. The model is then nudged towards the ones people prefer.

System prompts, which you never see, tell the model how to behave. Safety filters block certain categories of request. Retrieval systems quietly fetch documents before the model answers. Tool use lets the model call a search engine, run code, or query a database.
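Tool use is easier to picture with a small sketch. The idea is that the model outputs a structured request instead of an answer, and the surrounding software runs it and hands the result back. The request format below, a dictionary with `name` and `args`, is an assumption made for this example and is not any particular vendor's API. The two tools are deliberately trivial.

```python
from datetime import date


def get_today():
    """A tool the model cannot replace: it has no clock of its own."""
    return date.today().isoformat()


def add(a, b):
    """A trivial calculator tool."""
    return a + b


# The registry of tools the surrounding system is willing to run.
TOOLS = {"get_today": get_today, "add": add}


def run_tool_call(call):
    """Execute a request of the form {"name": ..., "args": {...}}."""
    tool = TOOLS.get(call["name"])
    if tool is None:
        return f"error: unknown tool {call['name']!r}"
    return tool(**call.get("args", {}))


print(run_tool_call({"name": "add", "args": {"a": 2, "b": 3}}))  # 5
print(run_tool_call({"name": "get_today"}))
```

Note that the model never runs anything itself. It only asks, and the system around it decides whether the request is allowed.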

Most of the gap between a raw LLM and a helpful assistant is made up of these layers. What makes the difference is rarely a bigger model. It is usually a smarter system around the model. The original InstructGPT paper is still the clearest public description of how RLHF turns a raw prediction engine into something polite and useful.

The cost question that quietly matters

The other thing worth understanding is cost. Running a large language model is not free. Every response is generated on expensive hardware in a data centre. The bigger the model, the higher the cost per answer.

This is why many AI products now mix models. A small, fast, cheap model handles routine questions. A larger, slower, more capable one is called in when the question needs it.

For a UK business building on these tools, the real cost question is not the headline price per message. It is how you route work to the right model at the right time. Get the routing right and the running cost is a fraction of what people expect. Get it wrong and you can burn through a marketing budget answering trivial questions with the heaviest model in the lineup.
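Routing can be as simple as a few rules. The sketch below sends short, routine questions to a small model and anything longer or more demanding to a large one, then compares the bill. The marker phrases, the word limit, and especially the prices are all invented for illustration. They are arbitrary cost units, not real pricing from any provider.

```python
# Hypothetical cost units per answer, not real prices.
PRICE_PER_ANSWER = {"small": 1, "large": 20}

# Made-up phrases that suggest a question needs the heavier model.
HARD_MARKERS = ("explain why", "compare", "draft", "analyse", "step by step")


def choose_model(question, word_limit=12):
    """Pick "large" for long or demanding questions, otherwise "small"."""
    text = question.lower()
    if len(text.split()) > word_limit or any(m in text for m in HARD_MARKERS):
        return "large"
    return "small"


def total_cost(questions):
    """Cost of answering every question with the model the router picks."""
    return sum(PRICE_PER_ANSWER[choose_model(q)] for q in questions)


questions = [
    "What time do you open?",
    "Where is my invoice?",
    "Compare these two lease clauses and draft a summary for the client.",
]

print(total_cost(questions))                         # 22 with routing
print(PRICE_PER_ANSWER["large"] * len(questions))    # 60 if everything goes to the large model
```

Real routers are usually smarter than keyword rules, and some use a small model to make the decision. The economics are the same either way: most questions are routine, so most answers can be cheap.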

The same logic applies to consumer subscriptions. The premium tiers are not just unlocking smarter answers. They are giving you a higher cap on the expensive model behind the scenes.

The practical takeaway

Treat a large language model as a brilliant generalist writer with a patchy memory and no access to today’s news. Use it for the writing, the structure, the explanation, the brainstorming, the editing, and the translation. Bring the facts to it when they matter. That division of labour is where these systems earn their keep.

If you want to go a layer deeper, the post on what artificial intelligence actually means sets out the broader vocabulary. The post on machine learning in plain English explains the engine room a level below the chat window. Together they make it easier to tell signal from sales pitch when someone tries to convince you their product is powered by AI.